Large Model - Qwen 1.8B Chat

This guide is a test version only.

Convert Model

Build virtual environment

Follow these docs to install conda.

Then create a virtual environment.

$ conda create -n RKLLM-Toolkit python=3.8
$ conda activate RKLLM-Toolkit   # activate
$ conda deactivate               # deactivate
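The toolkit wheel installed below is tagged cp38, so it only installs into CPython 3.8. A minimal sketch to confirm that the right environment is active before installing, assuming the environment is named RKLLM-Toolkit as above and relying on conda setting the `CONDA_DEFAULT_ENV` variable on `conda activate`:

```python
import os
import sys


def check_env(expected_env="RKLLM-Toolkit", expected_python=(3, 8),
              environ=None, version_info=None):
    """Return a list of problems; an empty list means the environment looks right."""
    environ = os.environ if environ is None else environ
    version_info = sys.version_info if version_info is None else version_info
    problems = []

    # conda exports the name of the active environment in CONDA_DEFAULT_ENV
    active = environ.get("CONDA_DEFAULT_ENV")
    if active != expected_env:
        problems.append(f"active conda env is {active!r}, expected {expected_env!r}")

    # the rkllm_toolkit wheel is built for CPython 3.8 (cp38)
    if tuple(version_info[:2]) != tuple(expected_python):
        found = ".".join(map(str, version_info[:2]))
        want = ".".join(map(str, expected_python))
        problems.append(f"Python {found} found, Python {want} required by the cp38 wheel")
    return problems


if __name__ == "__main__":
    issues = check_env()
    for issue in issues:
        print("WARNING:", issue)
    if not issues:
        print("Environment OK")
```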

Download the toolkit from airockchip/rknn-llm.

$ git clone https://github.com/airockchip/rknn-llm.git

Install dependencies

$ cd rknn-llm/rkllm-toolkit/packages
$ pip3 install rkllm_toolkit-1.0.0-cp38-cp38-linux_x86_64.whl
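If pip refuses the wheel as "not a supported wheel on this platform", the wheel's tags do not match the interpreter. The sketch below splits a wheel filename into its tags following the PEP 427 naming convention and compares the Python and platform tags with the running CPython. It only checks for an exact tag match (pip itself also accepts compatible tags such as manylinux ones), so treat a mismatch as a hint rather than a verdict:

```python
import sys
import sysconfig


def parse_wheel_name(filename):
    """Split a wheel filename into its tags (PEP 427 naming convention)."""
    if not filename.endswith(".whl"):
        raise ValueError(f"not a wheel: {filename}")
    parts = filename[: -len(".whl")].split("-")
    if len(parts) == 5:
        dist, version, py_tag, abi_tag, plat_tag = parts
    elif len(parts) == 6:  # optional build tag after the version
        dist, version, _build, py_tag, abi_tag, plat_tag = parts
    else:
        raise ValueError(f"unexpected wheel filename: {filename}")
    return {"dist": dist, "version": version, "python": py_tag,
            "abi": abi_tag, "platform": plat_tag}


def interpreter_tags():
    """Python and platform tags of the running interpreter (assumes CPython)."""
    py = f"cp{sys.version_info.major}{sys.version_info.minor}"
    # e.g. sysconfig reports 'linux-x86_64'; wheel tags use 'linux_x86_64'
    plat = sysconfig.get_platform().replace("-", "_").replace(".", "_")
    return py, plat


def wheel_matches(filename):
    """True if the wheel's Python and platform tags exactly match this interpreter."""
    tags = parse_wheel_name(filename)
    py, plat = interpreter_tags()
    return tags["python"] == py and tags["platform"] == plat
```

For example, `wheel_matches("rkllm_toolkit-1.0.0-cp38-cp38-linux_x86_64.whl")` should be `True` only inside a Python 3.8 environment on x86_64 Linux.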

Check whether the installation succeeded: the import below should complete without errors.

$ python
>>> from rkllm.api import RKLLM
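The same check can be run non-interactively, for example from a setup script. A minimal sketch that reports whether `rkllm.api` imports and exposes `RKLLM`; the helper name `rkllm_available` is hypothetical:

```python
import importlib


def rkllm_available():
    """Return True if rkllm.api can be imported and provides the RKLLM class."""
    try:
        api = importlib.import_module("rkllm.api")
    except ImportError:  # also covers ModuleNotFoundError
        return False
    return hasattr(api, "RKLLM")


if __name__ == "__main__":
    print("rkllm-toolkit installed" if rkllm_available() else "rkllm-toolkit NOT found")
```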

Convert

Download the Qwen-1.8B-Chat model into rknn-llm/rkllm-toolkit/examples/huggingface.

$ cd rknn-llm/rkllm-toolkit/examples/huggingface
$ git lfs install
$ git clone https://huggingface.co/Qwen/Qwen-1_8B-Chat
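If Git LFS was not active during the clone, the large weight files are replaced by small text stubs that start with the Git LFS pointer header, and conversion will fail later. A small sketch that lists such stubs in the cloned model directory; the helper name is hypothetical, and `git lfs pull` inside the directory is the usual way to fetch the real files:

```python
from pathlib import Path

# First line of every Git LFS pointer file (Git LFS pointer spec v1)
LFS_POINTER_PREFIX = b"version https://git-lfs.github.com/spec/v1"


def find_lfs_pointers(model_dir):
    """List files under model_dir that are still Git LFS pointer stubs."""
    root = Path(model_dir)
    if not root.is_dir():
        raise NotADirectoryError(f"{root} is not a directory")
    stubs = []
    for path in sorted(root.rglob("*")):
        # skip git's own metadata and anything that is not a regular file
        if ".git" in path.relative_to(root).parts or not path.is_file():
            continue
        with open(path, "rb") as f:
            head = f.read(len(LFS_POINTER_PREFIX))
        if head == LFS_POINTER_PREFIX:
            stubs.append(path)
    return stubs
```

Running `find_lfs_pointers("Qwen-1_8B-Chat")` from the huggingface directory should return an empty list once the weights are fully downloaded.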

Modify test.py as follows.

diff --git a/rkllm-toolkit/examples/huggingface/test.py b/rkllm-toolkit/examples/huggingface/test.py
index c253fe4..406ad37 100644
--- a/rkllm-toolkit/examples/huggingface/test.py
+++ b/rkllm-toolkit/examples/huggingface/test.py
@@ -5,7 +5,7 @@ https://huggingface.co/Qwen/Qwen-1_8B-Chat
 Download the Qwen model from the above website.
 '''
 
-modelpath = '/path/to/your/model'
+modelpath = './Qwen-1_8B-Chat'
 llm = RKLLM()
 
 # Load model
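Note that `'./Qwen-1_8B-Chat'` is resolved against the current working directory, so test.py has to be run from rknn-llm/rkllm-toolkit/examples/huggingface. A sketch of an optional helper that resolves the path relative to a given base directory instead and fails early if it does not look like a Hugging Face model directory; the helper name and the `config.json` check are assumptions, not part of the toolkit:

```python
from pathlib import Path


def resolve_model_dir(modelpath, base_dir):
    """Resolve a relative model path against base_dir and sanity-check it."""
    path = Path(modelpath)
    if not path.is_absolute():
        path = Path(base_dir) / path
    path = path.resolve()
    # Hugging Face model repositories ship a config.json next to the weights
    if not (path / "config.json").is_file():
        raise FileNotFoundError(f"no config.json in {path}; is this a Hugging Face model directory?")
    return path
```

Inside test.py it could be used as `modelpath = str(resolve_model_dir('./Qwen-1_8B-Chat', Path(__file__).parent))`, which makes the script independent of the directory it is launched from.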

Run test.py to generate the RKLLM model.

$ python test.py

The model qwen.rkllm will be generated in rknn-llm/rkllm-toolkit/examples/huggingface.
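Before copying the model to Edge2 it is worth confirming that the conversion actually produced a non-empty file. A minimal sketch with a hypothetical helper name; it reports the size but makes no assumption about what the size should be:

```python
from pathlib import Path


def check_rkllm_output(directory, name="qwen.rkllm"):
    """Return (path, size in MiB) of the converted model; raise if it is missing or empty."""
    path = Path(directory) / name
    if not path.is_file():
        raise FileNotFoundError(f"{path} not found; did test.py finish without errors?")
    size = path.stat().st_size
    if size == 0:
        raise ValueError(f"{path} is empty")
    return path, size / (1024 * 1024)
```

Usage from the huggingface directory: `path, mib = check_rkllm_output(".")`, then `print(f"{path}: {mib:.1f} MiB")`.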

Run NPU

Get source code

The runtime source code is in rknn-llm/rkllm-runtime. You can clone the repository again on Edge2 or copy it from your PC. Then put qwen.rkllm into rknn-llm/rkllm-runtime/example.
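The converted model is a large binary, so an interrupted copy from the PC to Edge2 is easy to miss. One way to check, sketched here, is to compute the SHA-256 of qwen.rkllm on both machines and compare the digests (`sha256sum qwen.rkllm` gives the same value). The file is streamed in chunks so it never has to fit in memory; the helper name is hypothetical:

```python
import hashlib


def sha256_of(path, chunk_size=1 << 20):
    """Stream a (possibly multi-GB) file through SHA-256 and return the hex digest."""
    h = hashlib.sha256()
    with open(path, "rb") as f:
        # read fixed-size chunks until f.read returns b"" at end of file
        for chunk in iter(lambda: f.read(chunk_size), b""):
            h.update(chunk)
    return h.hexdigest()
```

Run `print(sha256_of("qwen.rkllm"))` on the PC and on Edge2; the two digests must be identical.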

Modify rknn-llm/rkllm-runtime/example/build-linux.sh as follows.

diff --git a/rkllm-runtime/example/build-linux.sh b/rkllm-runtime/example/build-linux.sh
index 712b3be..bc5c575 100644
--- a/rkllm-runtime/example/build-linux.sh
+++ b/rkllm-runtime/example/build-linux.sh
@@ -4,7 +4,7 @@ if [[ -z ${BUILD_TYPE} ]];then
     BUILD_TYPE=Release
 fi
 
-GCC_COMPILER_PATH=~/gcc-arm-10.2-2020.11-x86_64-aarch64-none-linux-gnu/bin/aarch64-none-linux-gnu
+GCC_COMPILER_PATH=aarch64-linux-gnu
 C_COMPILER=${GCC_COMPILER_PATH}-gcc
 CXX_COMPILER=${GCC_COMPILER_PATH}-g++
 STRIP_COMPILER=${GCC_COMPILER_PATH}-strip
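With this change the script calls `aarch64-linux-gnu-gcc`, `aarch64-linux-gnu-g++` and `aarch64-linux-gnu-strip` from PATH instead of a downloaded cross toolchain. A small sketch to confirm those three binaries are found before building. On Debian/Ubuntu arm64 images the native GCC and binutils are usually also installed under these triplet-prefixed names, but treat that as an assumption to verify on your image:

```python
import platform
import shutil


def check_toolchain(prefix="aarch64-linux-gnu"):
    """Map each tool the build script derives from GCC_COMPILER_PATH to its location (None if missing)."""
    return {tool: shutil.which(f"{prefix}-{tool}") for tool in ("gcc", "g++", "strip")}


if __name__ == "__main__":
    # prints 'aarch64' when building natively on Edge2
    print("machine:", platform.machine())
    for tool, location in check_toolchain().items():
        print(f"{tool:6} {location or 'NOT FOUND'}")
```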

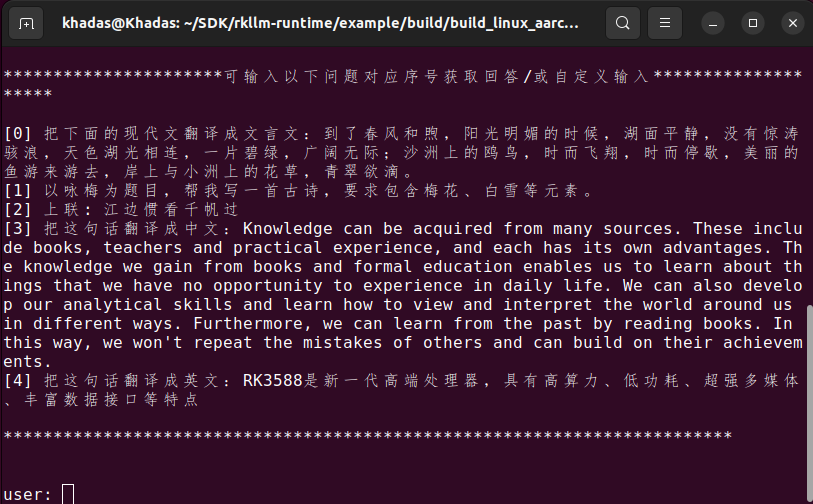

Compile and run

Last modified: 2024/04/25 03:47 by louis